The Projection Problem

Why smart teams ship the wrong product, and what AI changes about it. Multi-dimensional geometry explains why product development breaks down at every handoff.

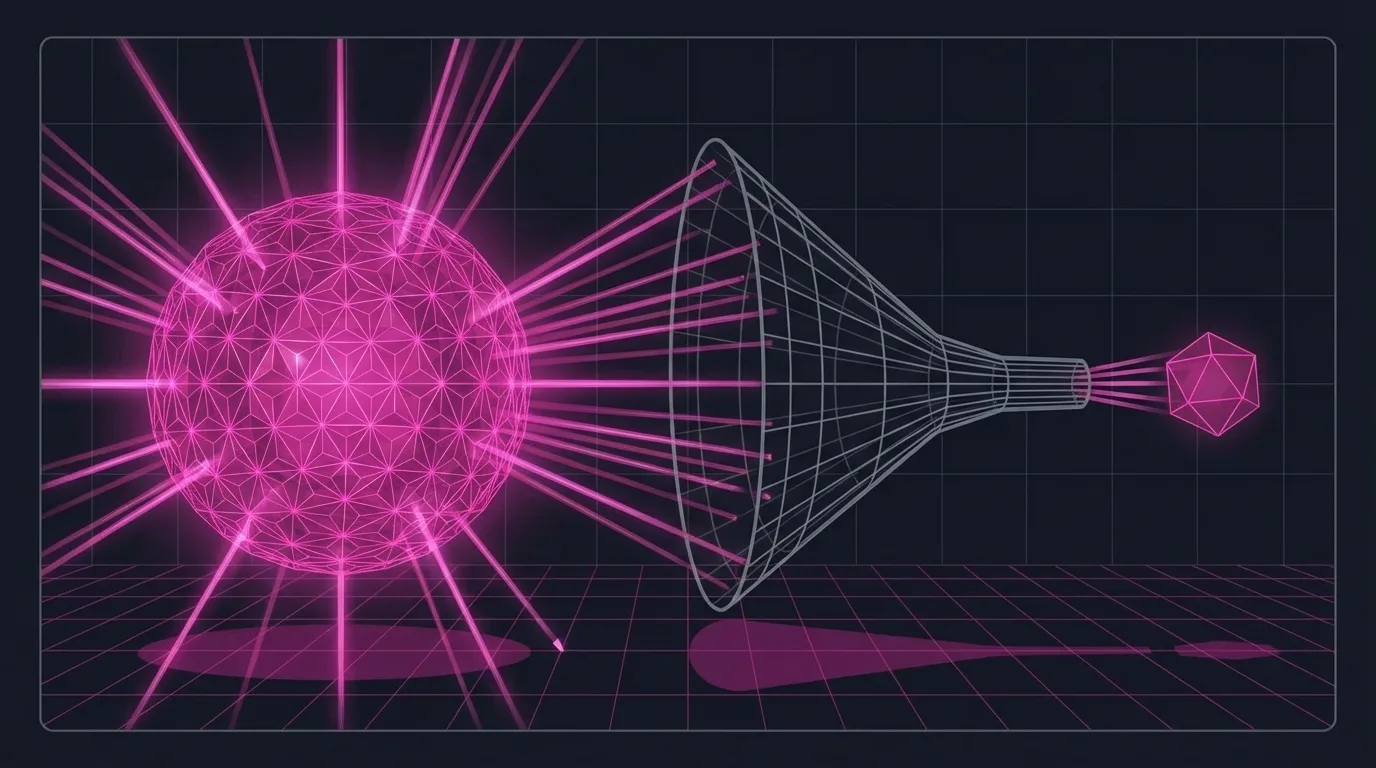

Start with a shadow

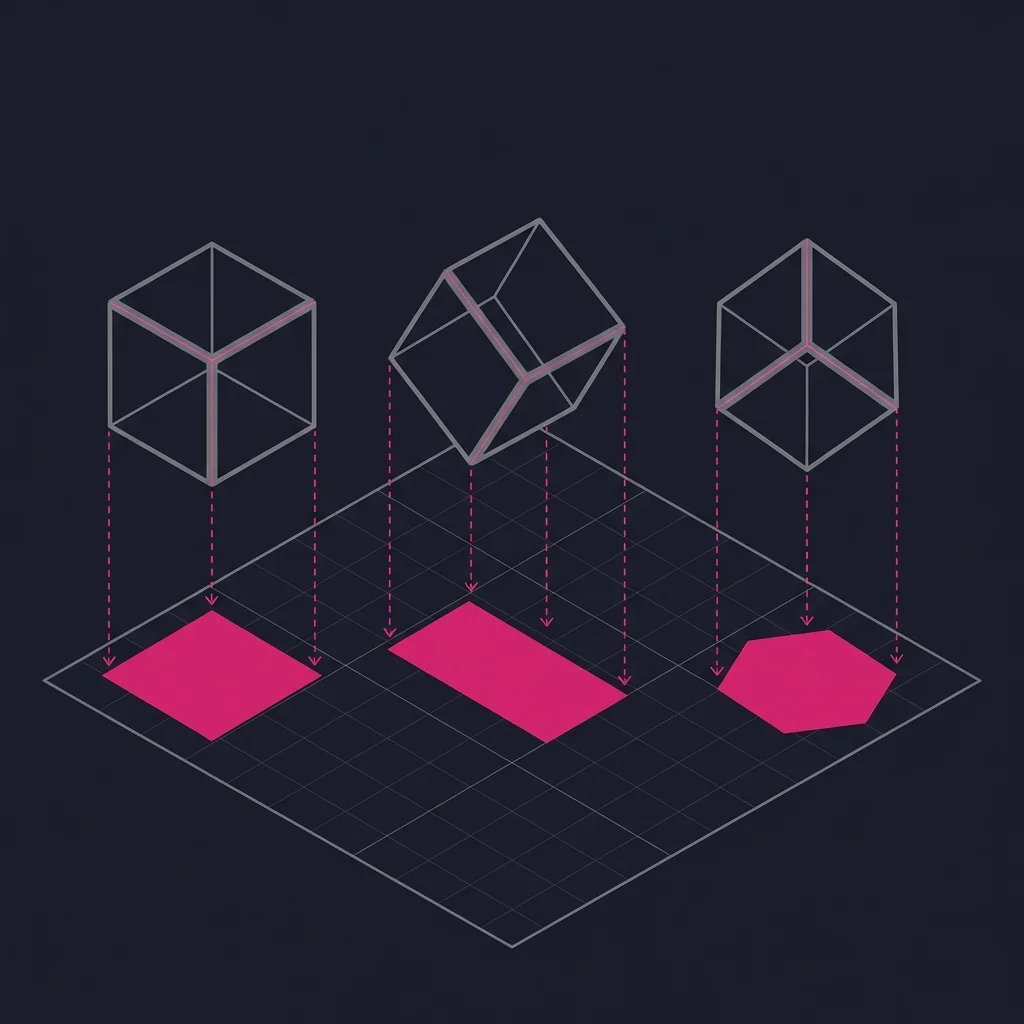

Hold an object in front of a light. The shadow on the wall is a projection: a two-dimensional representation of a three-dimensional thing. Put another way, the shadow is a compressed version of the object. It preserves some information (the outline, the rough proportions) while discarding the rest (the depth, the texture, the back side). The shadow is real and accurate. But it is not the object.

Now rotate the object. A cube held one way casts a square shadow. Tilt it and you get a rectangle. Tilt it further and the shadow becomes a hexagon. Same cube. Three completely different shadows.
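For readers who want to check the cube claim rather than take it on faith, here is a minimal Python sketch. It assumes a light shining straight down the z-axis (so the shadow simply drops the z coordinate) and a unit cube centered on the origin. The function names (`rotate`, `outline`, `shadow`, `size`) and the chosen tilt angles are illustrative choices, not anything from a particular library.

```python
import math
from itertools import product

# Corners of a unit cube centered on the origin.
CUBE = list(product((-0.5, 0.5), repeat=3))

def rotate(point, about_x, about_y):
    """Rotate a 3D point about the x-axis, then about the y-axis (radians)."""
    x, y, z = point
    y, z = (y * math.cos(about_x) - z * math.sin(about_x),
            y * math.sin(about_x) + z * math.cos(about_x))
    x, z = (x * math.cos(about_y) + z * math.sin(about_y),
            -x * math.sin(about_y) + z * math.cos(about_y))
    return x, y, z

def outline(points_2d):
    """Corners of the convex outline of the shadow (Andrew's monotone chain)."""
    pts = sorted({(round(x, 9), round(y, 9)) for x, y in points_2d})

    def cross(o, a, b):
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    def half_hull(seq):
        hull = []
        for p in seq:
            # Drop points that turn clockwise or sit on a straight edge.
            while len(hull) >= 2 and cross(hull[-2], hull[-1], p) <= 1e-9:
                hull.pop()
            hull.append(p)
        return hull[:-1]

    return half_hull(pts) + half_hull(reversed(pts))

def shadow(about_x, about_y):
    """Tilt the cube, then drop z to get the shadow's corner points."""
    return outline([rotate(p, about_x, about_y)[:2] for p in CUBE])

def size(corners):
    """Width and height of the shadow, rounded for display."""
    xs, ys = zip(*corners)
    return round(max(xs) - min(xs), 3), round(max(ys) - min(ys), 3)

flat = shadow(0, 0)                                            # face-on
tilted = shadow(math.pi / 4, 0)                                # balanced on an edge
on_corner = shadow(math.pi / 4, math.atan(1 / math.sqrt(2)))   # balanced on a corner

for name, corners in [("flat", flat), ("tilted", tilted), ("on corner", on_corner)]:
    print(name, "->", len(corners), "corners, width x height", size(corners))
```

The face-on shadow has four corners and equal sides, the edge-on shadow has four corners but is taller than it is wide, and the corner-on shadow has six corners. The cube never changes. Only the angle does.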

A projection compresses a higher-dimensional object into fewer dimensions. Some information survives. A lot doesn't. Which information survives depends on the angle.

If you collect enough projections from enough different angles, you can reconstruct the original shape. Five or six well-chosen views of a cube give you enough to determine "this is a cube with edges of length X." Each projection is a lossy compression on its own, but together they can reconstruct the whole.

The dangerous part

Different three-dimensional objects can cast the exact same shadow.

Is that rectangular shadow from a cube? A tall prism? A wedge? From one projection, you can't tell. Two completely different objects can produce the same compressed output.

You might be confident you know what the object is. Other people might agree with you, because they're looking at the same shadow. But you could each be imagining a different 3D object behind that identical 2D projection, and none of you would have any way of knowing.
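The same-shadow claim is easy to sketch in a few lines of Python. The three solids below are described by their corner points, with the light shining straight down so the shadow forgets every height value. The specific dimensions (a unit cube, a prism three units tall, a ramp-shaped wedge) are assumptions picked for illustration.

```python
# Each solid is listed by its corner points (x, y, z). Sizes are illustrative.
CUBE = [(x, y, z) for x in (0, 1) for y in (0, 1) for z in (0, 1)]
TALL_PRISM = [(x, y, z) for x in (0, 1) for y in (0, 1) for z in (0, 3)]
# A wedge: one unit high along the x=0 edge, sloping down to the floor at x=1.
WEDGE = [(0, 0, 0), (1, 0, 0), (0, 1, 0), (1, 1, 0), (0, 0, 1), (0, 1, 1)]

def shadow_from_above(corners):
    """Light shines straight down, so the shadow keeps x and y and drops z."""
    return {(x, y) for x, y, _ in corners}

shadows = {
    "cube": shadow_from_above(CUBE),
    "tall prism": shadow_from_above(TALL_PRISM),
    "wedge": shadow_from_above(WEDGE),
}

same_shadow = shadows["cube"] == shadows["tall prism"] == shadows["wedge"]
same_object = sorted(CUBE) == sorted(TALL_PRISM) == sorted(WEDGE)

print("identical shadows:", same_shadow)
print("identical objects:", same_object)
```

All three shadows are the same unit square, and nothing in `shadows` tells you which solid produced it. The only way to tell them apart is to ask for a view from a different angle.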

Now go up a dimension

Everything above still applies when you add a dimension. Picture a four-dimensional cube projected into three dimensions.

At the risk of sounding like a CEO who is being overly academic in a blog post, I think this analogy is worth the detour.

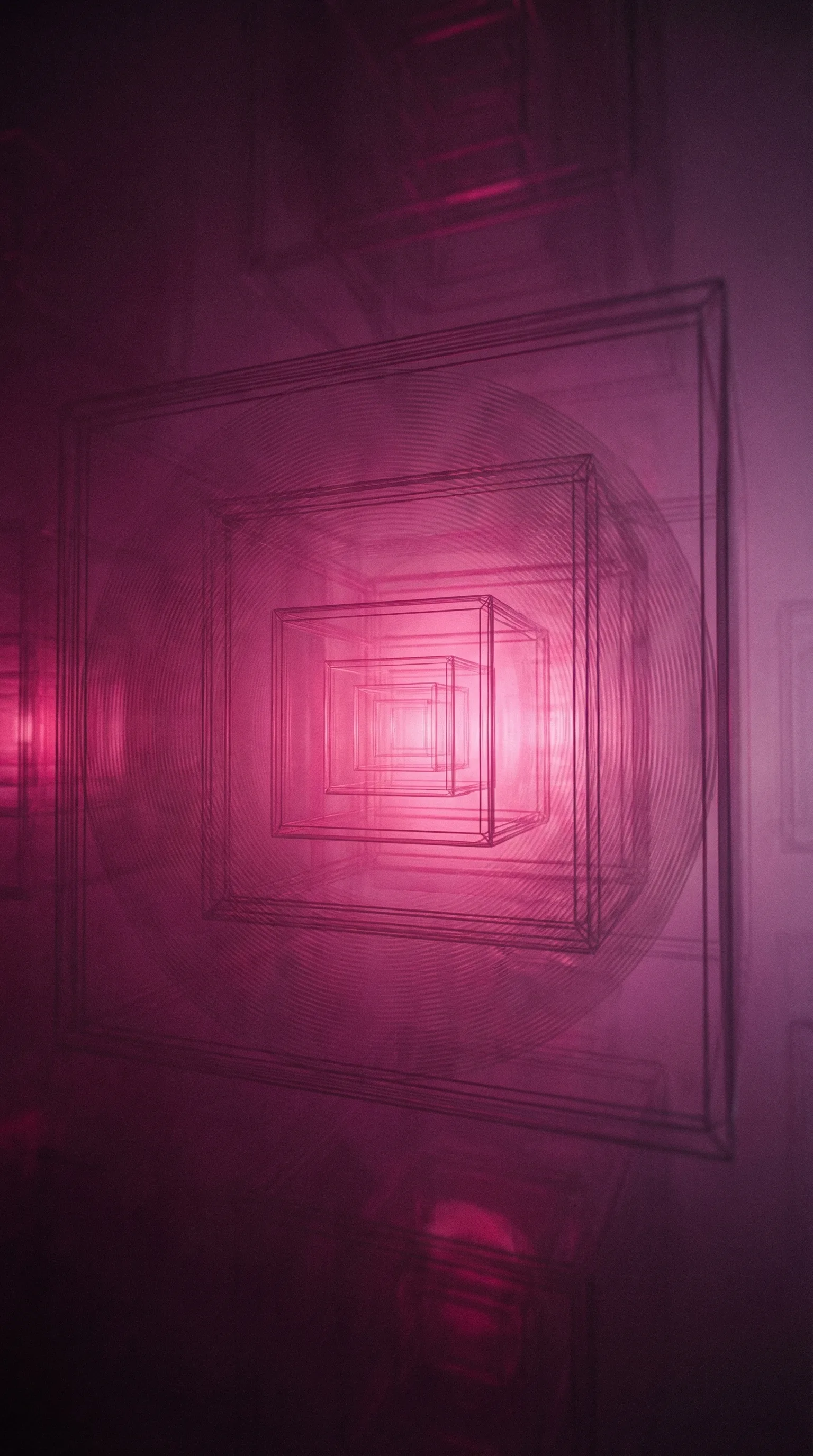

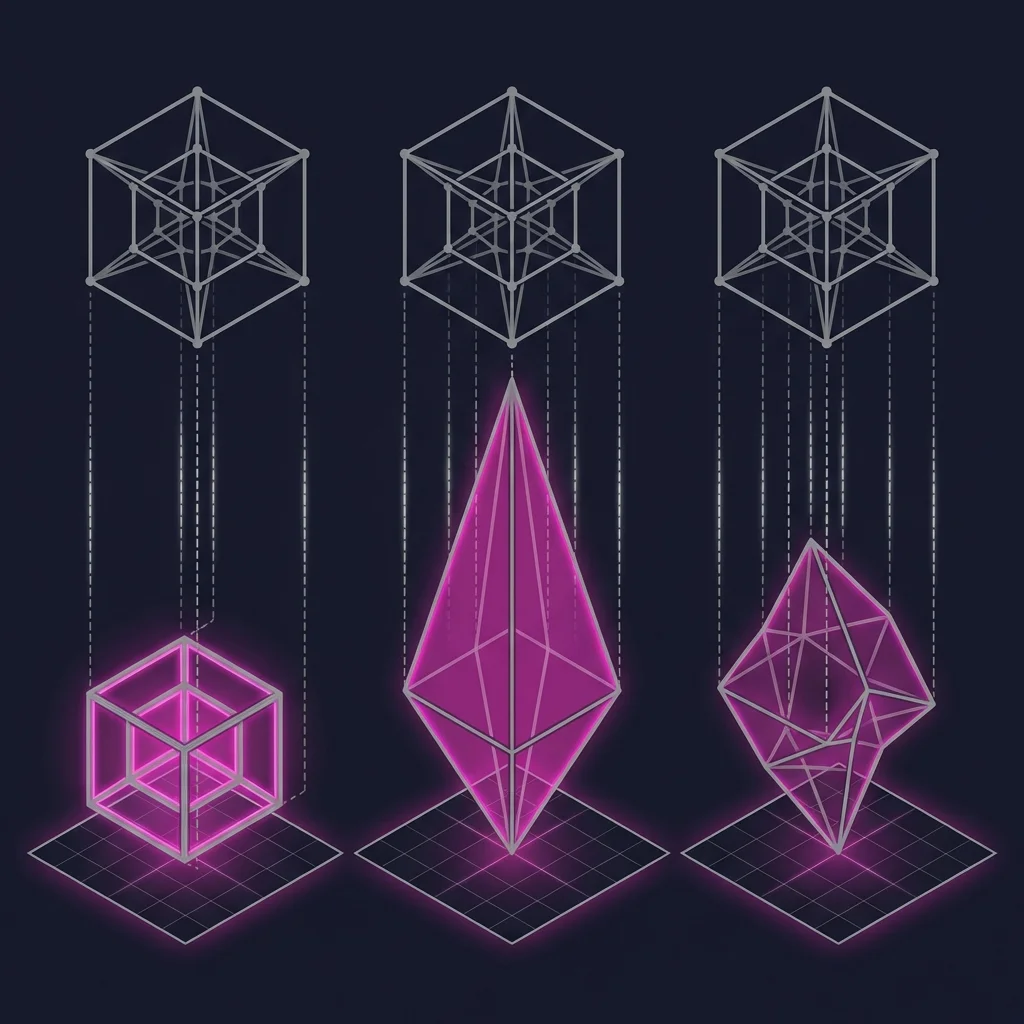

A four-dimensional cube (a tesseract) is a real mathematical object. We can't picture it directly because our brains are wired for three spatial dimensions. But we can project it into 3D, the same way we project a 3D cube into a 2D shadow.

One projection looks like a cube nested inside a larger cube. Another looks like a diamond within a diamond. A third is an irregular shape you wouldn't recognize. Same object, three different shapes. None of them is the object itself.
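Here is a rough Python sketch of the cube-within-a-cube view. It assumes a perspective projection: a viewer sits at a fixed distance along the fourth axis (`viewer_w`, an arbitrary value of 3), and points closer to the viewer are drawn larger. The names `rotate_xw`, `project_to_3d`, and `view` are made up for this example.

```python
import math
from itertools import product

# The 16 corners of a tesseract: every combination of -1 and +1 in 4D.
TESSERACT = list(product((-1.0, 1.0), repeat=4))

def rotate_xw(point, angle):
    """Rotate a 4D point in the plane spanned by the x and w axes."""
    x, y, z, w = point
    c, s = math.cos(angle), math.sin(angle)
    return (x * c - w * s, y, z, x * s + w * c)

def project_to_3d(point, viewer_w=3.0):
    """Perspective projection: corners nearer the viewer (larger w) look bigger."""
    x, y, z, w = point
    scale = viewer_w / (viewer_w - w)
    return (x * scale, y * scale, z * scale)

def view(angle):
    """The 3D 'shadow' of the tesseract after turning it by `angle`."""
    return sorted(
        tuple(round(c, 6) for c in project_to_3d(rotate_xw(p, angle)))
        for p in TESSERACT
    )

straight_on = view(0.0)
rotated = view(math.pi / 4)

# Straight on, every coordinate is one of two magnitudes: a small cube
# sitting inside a large cube.
sizes = sorted({abs(c) for corner in straight_on for c in corner})
print("cube half-widths, straight on:", sizes)
print("same shape after rotating?", straight_on == rotated)
```

Straight on, eight corners land on a cube of half-width 1.5 and the other eight on a cube of half-width 0.75, which is the nested-cube picture. Turn the tesseract in a direction we cannot perceive and the 3D shape changes, even though the 4D object is untouched.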

If you and a friend are both looking at a 3D projection of a tesseract, how would you know if you're imagining the same 4D object? You'd talk about it in 3D language. You'd draw 3D pictures. The actual 4D object lives in a space neither of you can directly perceive. You might agree completely at the 3D level and still be holding different 4D objects in your heads. Just like with the cube and the wedge casting the same shadow, you'd have no way of knowing. And the problem gets worse as you go up in dimensions, because the gap between what exists and what can be communicated keeps growing.

The Projection Problem

Compressing a higher-dimensional object into lower dimensions loses information that you can't recover by examining the projection. Different high-dimensional objects can produce identical projections, making disagreement invisible.

I call this the Projection Problem. It applies far beyond geometry. It applies to anything where a complex thing must be communicated through a simpler channel. The most important example is ideas.

Now go up a million dimensions

We went from a 3D cube compressed into 2D, to a 4D tesseract compressed into 3D. At each step, the gap between the object and its projection grew, and the ability to detect misalignment shrank.

Now go up to a million dimensions. Each dimension adds nuance and specificity that the lower-dimensional version has no room for. Ideas work the same way. A rich idea, the kind worth building a product around, requires thousands or millions of dimensions to represent fully and precisely. This is where sophisticated ideas live.

Think about what it means to truly understand a product. Not the feature list, but the real thing: the problem it solves, the edge cases, the way it should feel, the tradeoffs you'd accept, the ones you wouldn't, the competitive context, the technical constraints, the reason it matters. Each of those facets is a dimension. A rich understanding has more dimensions of information than a simple one, more independent axes along which the idea has meaning. A longer document has more words. A richer mental model has more axes. (AI researchers call this kind of representation an embedding, and the parallel to what I'm describing here is not a coincidence.)

Now try to explain that million-dimensional understanding to someone.

That's what you're doing when you explain an idea: compressing a high-dimensional object into a much lower-dimensional space. Language is that lower-dimensional space. Even a long conversation might operate across ten to a hundred meaningful dimensions, the distinct concepts you can actually convey in words. Some of those dimensions carry a lot. A phrase like "the map is not the territory" packs a complex idea into six words. But even the best compression into a hundred dimensions can't fully represent a million-dimensional object. The compressed version isn't wrong. It just can't carry enough of the original to distinguish it from other, different million-dimensional objects that would compress to the same words.

Emphasize the technical architecture and the idea sounds like one product. Emphasize the user problem and it sounds like another. Emphasize the market positioning and it sounds like a third. All accurate projections. None the complete object. Two people can use the same words to describe ideas that are completely different at the million-dimensional level. They're aligned at the projection level, not at the idea level. There's no way to detect the difference from within the hundred-dimensional space of conversation.
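A toy version of this in Python, using the essay's own numbers: a million-dimensional idea squeezed into a hundred numbers. Summing each block of dimensions is a stand-in I am assuming for "putting it into words", not a claim about how language actually works, and `compress` and `rearranged` are hypothetical helpers.

```python
IDEA_DIMS = 1_000_000   # size of the full idea
WORD_DIMS = 100         # what the conversation can carry

def compress(idea, out_dims=WORD_DIMS):
    """Summarize each block of dimensions with a single number (its sum)."""
    block = len(idea) // out_dims
    return [sum(idea[i * block:(i + 1) * block]) for i in range(out_dims)]

def rearranged(idea, out_dims=WORD_DIMS):
    """Build a different idea: same values, reversed inside every block."""
    block = len(idea) // out_dims
    other = []
    for i in range(out_dims):
        other.extend(reversed(idea[i * block:(i + 1) * block]))
    return other

my_idea = [i % 7 for i in range(IDEA_DIMS)]
your_idea = rearranged(my_idea)

# How many of the million dimensions actually disagree?
differing = sum(a != b for a, b in zip(my_idea, your_idea))

print("same words:", compress(my_idea) == compress(your_idea))
print("same idea:", my_idea == your_idea)
print("dimensions that differ:", differing)
```

The two compressed summaries match exactly while most of the underlying dimensions differ. Comparing summaries can never reveal that, because the difference lives entirely in what the compression threw away.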

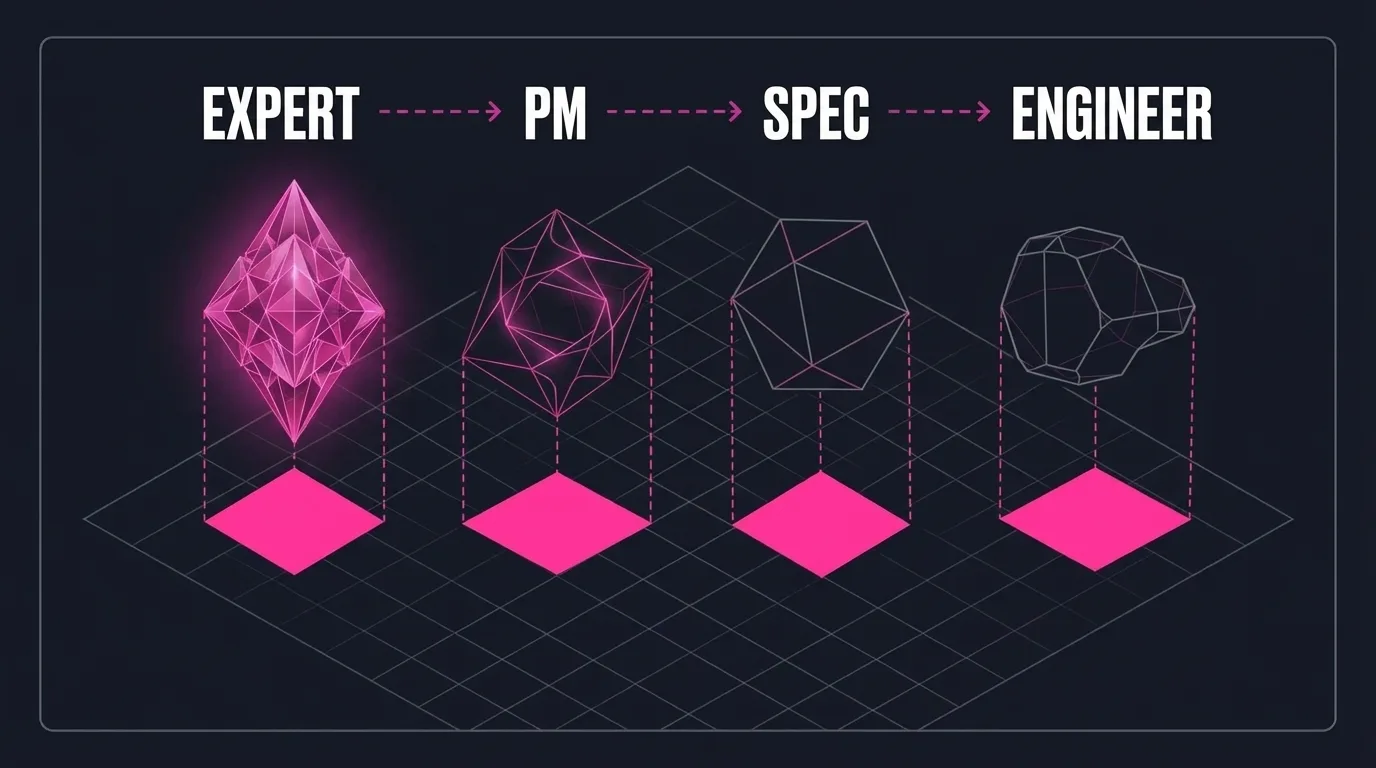

The telephone game destroys products

Apply this to how most companies build products.

Someone with deep expertise has a rich, high-dimensional mental model of the problem and solution. They explain it to a product manager. The PM reconstructs a high-dimensional object in their own head, but it's a different object, because the projection didn't carry enough to fully specify the original. There were dimensions the expert didn't think to mention and the PM didn't think to ask about.

The PM compresses their version into a spec, an engineering lead reconstructs yet another object, and engineers reconstruct their own.

Each handoff is a full round of compression and decompression. Each introduces invisible distortion. The spec "looks right." The code "matches the spec." But by the final implementation, the product might check every box on paper while being a completely different object from the one the expert envisioned.

Teams that seem perfectly aligned ship products that miss the mark all the time. They weren't aligned. They were looking at similar projections of different ideas and assuming agreement.
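The handoff chain can be sketched as a toy simulation in Python. Everything numeric here is an assumption for illustration: a 1,000-dimensional idea, 600 dimensions conveyed faithfully per handoff, and receivers who fill the unspoken dimensions with their own guesses. `handoff` and `run_chain` are hypothetical names.

```python
import random

def handoff(sender_model, conveyed, hop, rng):
    """One round of compression and decompression.

    A random subset of dimensions is conveyed faithfully. The receiver fills
    every other dimension with a guess of their own.
    """
    dims = len(sender_model)
    spoken = set(rng.sample(range(dims), conveyed))
    # Guesses are offset by the hop number so they never match the expert's
    # original values (which all lie between 0 and 1).
    return [
        sender_model[i] if i in spoken else hop + rng.random()
        for i in range(dims)
    ]

def run_chain(dims=1000, conveyed=600, hops=4, seed=0):
    """Return how many of the expert's dimensions survive after each handoff."""
    rng = random.Random(seed)
    expert = [rng.random() for _ in range(dims)]
    model, surviving = expert, []
    for hop in range(1, hops + 1):
        model = handoff(model, conveyed, hop, rng)
        surviving.append(sum(a == b for a, b in zip(expert, model)))
    return surviving

fidelity = run_chain()
for hop, kept in enumerate(fidelity, start=1):
    print(f"after handoff {hop}: {kept} of 1000 original dimensions intact")
```

At every step the receiver's model agrees with the sender's on everything that was actually said, so each handoff "looks right" locally. The count of dimensions that still match the expert can only stay flat or fall, because a dimension lost at one hop is never recovered at a later one.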

Fewer handoffs, better angles

If you accept this model, a few things follow.

1) Start with the right expert. You want the person with the most accurate high-dimensional mental model of the problem. Usually that is someone who has lived it, with years of direct experience, whose understanding is rich enough to detect distortions that wouldn't show up in a spec.

2) Minimize the telephone game. You want as few nodes in the chain as possible. Every handoff introduces a round of lossy compression and decompression, and the information doesn't degrade in ways you can see. It degrades invisibly. Two people can have a productive conversation and walk away holding completely different high-dimensional objects, and neither will know. Cutting from four handoffs to one might be the difference between the right product and a plausible-looking wrong one.

3) Vary the projection angles. A hundred projections from nearly the same angle tell you almost nothing new. Four or five well-chosen projections can be more valuable than a hundred redundant ones.

The Curse of Knowledge

There's a problem with asking the expert to choose the projection angles: the curse of knowledge. When you deeply understand something, you lose the ability to remember what it's like to not understand it. You don't know which dimensions are obvious to you but invisible to everyone else. So you project from the same familiar angles repeatedly, reinforcing what's already clear and leaving the sparse dimensions untouched.

Listening to an expert lecture is less effective than interviewing that expert.

If I make ten statements about my idea, I'll project from the angles that feel most important to me. Those might be the dimensions the listener already grasps. The gaps remain gaps.

The better approach: I make one statement. You ask a targeted question to probe a dimension you don't understand. I answer. You recalibrate and ask from a different angle. Each question is a request for a new projection, and because the questions come from the receiver, they naturally target the dimensions where information is most needed.

The receiver knows what they don't know. The sender, almost by definition, does not. Let the receiver choose the projection angles.
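The lecture-versus-interview difference can be made concrete with a small Python sketch. It bakes in the scenario described above as an explicit assumption: the expert's favorite angles (`EXPERT_PRIORITY`) largely overlap with what the listener already grasps (`LISTENER_KNOWS`). The sizes and names are hypothetical.

```python
ALL_DIMS = list(range(20))        # every facet of the idea (toy size)
LISTENER_KNOWS = set(range(8))    # facets the listener already grasps
# Assumption: the expert ranks facets by how important they feel, and the
# top of that ranking happens to be what the listener already has.
EXPERT_PRIORITY = list(range(20))

def lecture(statements):
    """The sender picks the angles: the top-ranked facets, known or not."""
    known = set(LISTENER_KNOWS)
    known.update(EXPERT_PRIORITY[:statements])
    return known

def interview(questions):
    """The receiver picks the angles: each question targets a current gap."""
    known = set(LISTENER_KNOWS)
    gaps = [d for d in ALL_DIMS if d not in known]
    known.update(gaps[:questions])
    return known

after_lecture = lecture(10)
after_interview = interview(10)

print("known after 10 statements:", len(after_lecture), "of", len(ALL_DIMS))
print("known after 10 questions: ", len(after_interview), "of", len(ALL_DIMS))
```

With the same ten exchanges, the lecture spends eight of them on facets the listener already had, while every interview question lands on a gap. The result depends on the overlap assumption, but that overlap is exactly what the curse of knowledge predicts.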

AI changes the equation

At Abnormal, we recently wanted to build a new insider threat product. The conventional approach: a domain expert advises product managers, who write specs, which engineers interpret. The standard telephone game.

Instead, we hired Stephen Harrison, a CISO who spent twenty years living and breathing insider threat at organizations like MGM Resorts. He didn't become an advisor. He became a head of product. No amount of verbal synthesis would help a conventional product manager understand this domain as accurately as someone who has been firefighting these scenarios for two decades. We wanted his brain, not a summary of his brain.

Stephen runs an entire product division. His division is a team of one, despite available headcount. That's intentional. Every person added is another round of compression and decompression, another opportunity for the object in his head to silently mutate. We want the minimum number of hops between his brain and the code.

And instead of Stephen lecturing the AI, the AI interviews him. It probes the dimensions where its model is weakest, pulling out examples and edge cases Stephen wouldn't have volunteered because of the curse of knowledge. The AI has no ego about what it doesn't know. It tracks which dimensions are still sparse and formulates questions to fill those gaps. This is the fastest path to reconstruct the million-dimensional idea in Stephen's head and convert it into a product we can give our customers.

Expert to AI. One handoff. Decompression guided by targeted extraction rather than passive reception.

Where the model breaks

There are cases where more people and more handoffs help. A second set of eyes can catch distortions the original thinker is blind to. Sometimes the telephone game adds a perspective that the expert's million-dimensional model doesn't contain, because nobody's model is truly complete. The Projection Problem describes a real and underappreciated failure mode, but minimizing handoffs is not always the right move. It's the right default.

The question is whether you're adding people to the chain because they bring dimensions the source is missing, or because your process assumes that's just how building works. Most of the time, it's the latter.

Shadows all the way up

Every conversation you've ever had about a product, a strategy, a vision, was a shadow on a wall. A compression of something richer and more complex than language can carry. You were holding up your high-dimensional understanding to the light and hoping the shadow you cast looked enough like the one in the other person's head.

Most of the time, it did. Most of the time, the shadows were close enough.

But every now and then, two people stare at the same shadow on the wall, nod in agreement, and walk away building completely different things.

Neither of them ever finds out.

-Evan

Questions for the reader

- This article describes one clean chain. Many orgs run hundreds in parallel. How many rounds of compression happen before a customer problem becomes shipped code?

- Compression is just one failure mode. What about noise, echo chambers, and feedback loops that never close?

- How do you test whether your team is aligned on the actual idea, or just aligned on a projection of it?

- How should organizations redesign themselves to maximize accurate information throughput between the people who understand problems and the systems that solve them?

- What other operating assumptions about org design are built on pre-AI constraints? What are the consequences of leaving them in place?

- Which "best practices" are actually just workarounds for low-bandwidth communication?