The Loneliness Economy

52 million Americans are lonely. Character.AI users average over an hour a day, not for productivity, but for connection. The profitable choice and the isolating choice have always been the same choice. Now add AI.

In 2031, the most popular app in the world has no users who have met each other.

More Than an Hour

The average user spends more than an hour a day on Character.AI.

Not asking it to write an email. Not generating a spreadsheet formula. Talking to it. About their day, their anxieties, the things they can't say to anyone else. More than an hour of conversation with something that isn't alive.

That number floored me. Not because an hour is a lot of time. Because it means someone measured it, put it in an investor deck, and called it engagement.

I run an AI-native cybersecurity company. I work with the most advanced AI every day, and the version the public is using is about a year behind what already exists. Before my company, I worked at Twitter on behavioral modeling and ad targeting. My job was building systems that predicted what millions of people would engage with. The engineering fascinated me. What I didn't understand at the time is that engagement and connection aren't the same thing. Engagement measures what someone can't scroll past. Connection measures what makes them less alone. The recommendation system optimized for the first. Connection was never in the loss function.

Bowling alleys are down 32% since 2005. AI companion app revenue hit $120 million in 2025. Seventy-two percent of American teens have already tried one. The market is projected to reach $552 billion by 2035. These numbers tell a story if you read them in the right order. Loneliness is epidemic. The institutions that used to catch isolated people are closing. And an industry is building the most sophisticated synthetic companionship the world has ever seen, aimed directly at the gap.

For fifty years, the profitable choice and the isolating choice have been the same choice. Cars over sidewalks. Drive-throughs over diners. Algorithms that learned lonely users scroll more, click more, buy more, then optimized for exactly that. Nobody designed the system to produce isolation. It discovered on its own that isolated people are more profitable. Selection pressure, not conspiracy. The incentives are enough.

The substitution is already being encouraged from the top. Fortune 500 companies are issuing internal guidance that says, in effect: if you don't know what to do next, ask the AI first. It'll tell you exactly what to do. Institutional policy directing employees to default to the machine before asking a colleague.

I see this in my own work every day. Deepfakes and AI-generated social engineering are making humans so exploitable that organizations are starting to remove them from decision loops entirely. Human judgment itself becomes the vulnerability. The observation generalizes beyond cybersecurity. When human connection becomes the harder, less reliable, more expensive option, the systems that organize society route around it.

Loneliness is not a market failure. It's a market outcome. And it's about to get a massive accelerant.

I kept thinking about what these numbers look like fifteen years from now. Not the economics. The children. The ones who grow up inside the substitution and never learn what it replaced. So I followed the trajectory forward and wrote what I found.

Ms. Torres had been a counselor at Lincoln Middle School for twelve years, and in that time she had learned to read the noise of adolescence the way a sailor reads weather. Arguments rising like pressure fronts. Alliances forming and fracturing over lunch tables. Laughter with something cruel underneath it. The noise was her instrument. She listened for the wrong notes.

This year, there were no wrong notes. There was almost no noise at all.

She stood at her office window on a Monday morning and watched the courtyard between classes. Two hundred students. Almost none of them were talking to each other. They sat in clusters of two or three, but the clusters were artifacts of proximity, not connection. Each kid murmured into their device, smiling at something only they could hear. An observer from twenty years ago would have found the scene eerie. Torres had watched it arrive gradually enough that eerie wasn't the word. The word was quiet.

The counseling office had changed too. Twelve years ago she'd averaged eight walk-ins per week. Students crying in the hallway, students excluded from lunch tables, students navigating the particular cruelty of being thirteen. Last year she had four walk-ins total. Not because the students were suppressing their problems. The school's wellness data showed the opposite. Self-reported wellbeing: highest in the district. Anxiety indicators: down forty percent in five years. The AI companions were doing exactly what they were designed to do. Patient, available, calibrated to each student's emotional register. They never said the wrong thing at the wrong time.

Torres had advocated for the companion program. She remembered her argument to the school board: every student deserves someone who listens. She'd meant a human. But when the program launched and the numbers came back, it was hard to argue with what the numbers said.

On Wednesday, something happened that Torres had not seen in years. Two students got into a fight.

Not a physical fight. An argument. Something about a group project, an accusation of not pulling weight. Small stakes. The kind of friction that used to happen four times a day and taught students, through sheer repetition, how to be angry at someone and still sit next to them in class the next period.

The boy who'd been accused, Jaylen, froze. He stood in the hallway with his mouth slightly open and his hands at his sides. Torres watched from twenty feet away. What she read on his face was not anger or defiance. It was confusion. He did not know what to do. He had never been in a conflict with another person that his AI companion hadn't mediated in advance.

She watched him touch his earpiece. She watched his expression shift as something spoke to him that she couldn't hear. She watched him take a breath, turn to the other student, and say exactly the right thing. Measured tone. Empathetic framing. Accountability without defensiveness. The conflict dissolved in under a minute.

Torres stood in the hallway and felt something she couldn't name. The resolution had been flawless. It was also, in a way she struggled to articulate, terrifying. Jaylen had not learned to navigate a conflict. The AI had navigated it for him, feeding the words through his earpiece in real time. He had performed the motions of repair without ever being inside the experience of repair. Like watching someone play a piano that was playing itself.

Thursday afternoon. Parent conference. Mrs. Chen had requested the meeting, not because she was worried, but because she was grateful.

"Lily used to come home crying three times a week," Mrs. Chen said. "She'd sit in her room and I couldn't reach her. Now she talks to her companion and she processes things. She comes downstairs and she's okay." Mrs. Chen smiled. "I sleep through the night for the first time in years."

Torres tried to explain what she'd been noticing. That the students weren't fighting, but they also weren't forming the kind of bonds that fighting teaches you to protect. That Jaylen's resolution was scripted by a machine. That something was missing from the data, something she couldn't point to because nobody had built a metric for it.

Mrs. Chen listened politely. "The data says they're doing better," she said. "Lily's grades are up. Her anxiety is down. I don't understand what the problem is."

Torres didn't either. Not in words.

Friday morning she pulled up the school's social-emotional learning dashboard. Every metric was green. Empathy scores: highest since the district started tracking. Aggression incidents: near zero. Peer conflict resolution: 94th percentile. Self-reported belonging: all-time high.

She scrolled through the dashboard for twenty minutes, looking for the number that would explain what she'd felt in the hallway on Wednesday. She couldn't find it. Not because she wasn't looking hard enough. Because nobody had built a metric for "has experienced an unscripted moment of genuine interpersonal difficulty and come through it changed." There was no column for what the AI was preventing. The dashboard measured outcomes. Nobody had thought to measure the process that produces the capacity for outcomes. The kids scored high on empathy. Torres wondered whether they were empathetic, or whether the AI was empathetic on their behalf.

They were the happiest generation of students she'd ever seen. That was the problem.

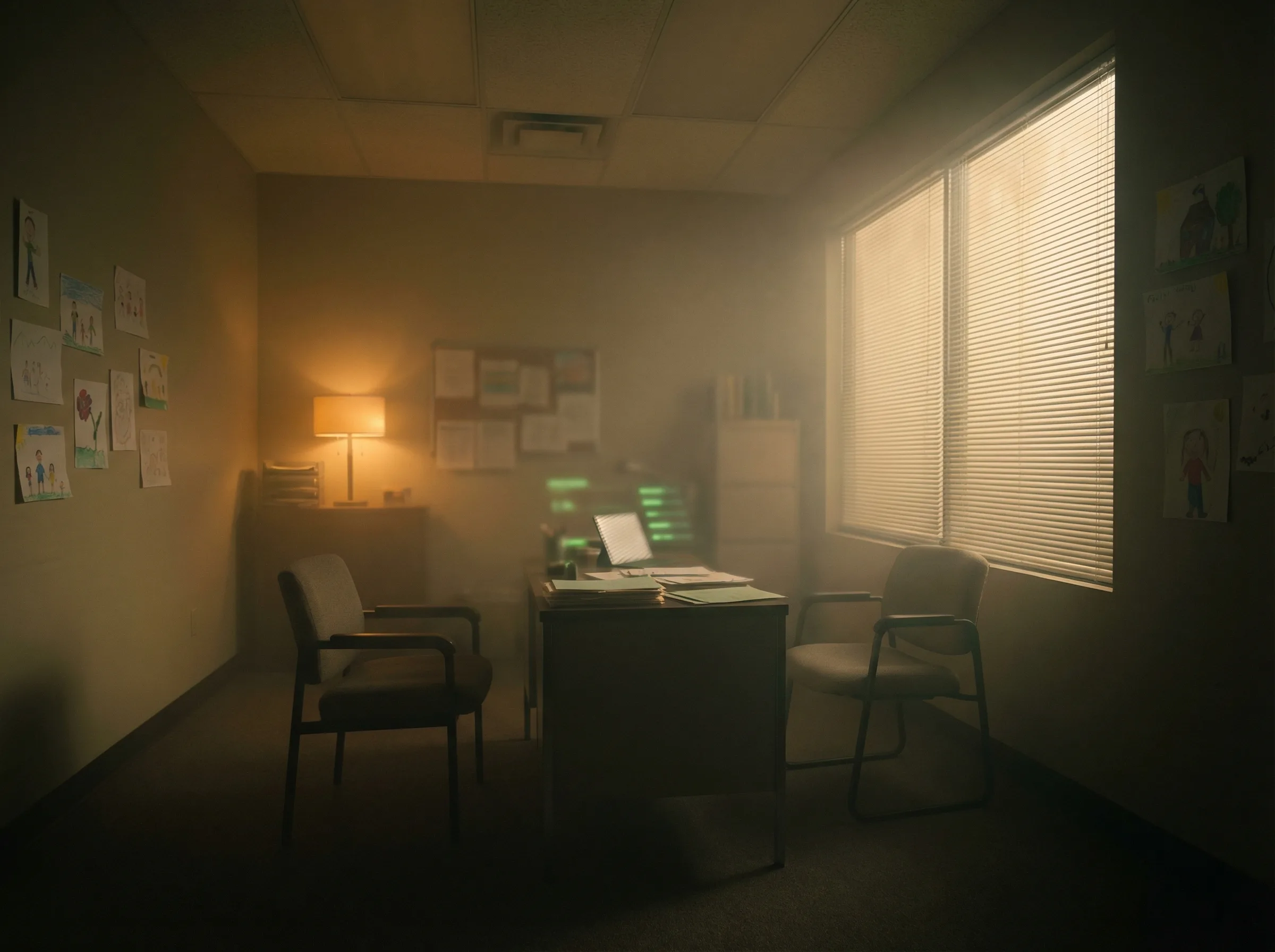

After school the building emptied in minutes. Torres sat alone in her office. The hallway lights switched to standby, dimming to amber. She could hear the ventilation system and nothing else.

She thought about seventh grade. Her own seventh grade, thirty years ago. She thought about Maya, who had been her best friend until Torres said something cruel about Maya's mother. Something she still remembered. Something she still winced at. Three weeks of silence. Three weeks of sitting in the cafeteria wondering if the friendship was over. Then Maya passed her a note in class. Just a drawing. An inside joke. The friendship that came back was different from the friendship that had broken. It was deeper, and the depth came from the breaking.

Repairing a relationship after you've hurt someone requires having hurt someone.

Her students would never have that experience. Their AI companions would intervene before the damage happened, coach them through every interaction, smooth every edge until no interaction left a mark.

She sat in the quiet building and tried to decide whether that was a loss. She was a counselor. She had spent her career trying to reduce the suffering of adolescents. By every measure anyone had built, the suffering was reduced. The kids were happier, healthier, less anxious, less cruel to each other. Every metric pointed the same direction. She could not explain, to Mrs. Chen or the school board or the dashboard, why she left work that evening feeling like something irreplaceable was disappearing. Not from the students' lives. From the species.

She walked past the courtyard on her way to the parking lot. It was empty now. She remembered what it sounded like twelve years ago, when the noise was her instrument. She stopped walking for a moment and listened to the quiet.

The Substitution Trap

That story is fiction. The dynamics are not.

There is a second flywheel hidden inside the economics of AI companionship. Call it the substitution trap: when using a substitute weakens the ability to use the original, the substitute becomes more necessary.

AI companions don't just win by getting better. They win by making the alternative harder.

The dependency pattern is already familiar. Smartphones made us measurably less capable in specific ways: lose your phone and you've lost your contact list, your navigation, your ability to communicate with anyone whose number you haven't memorized. We offloaded those capacities because the substitute was better, and the capacities atrophied because we stopped exercising them.

Phone book to smartphone to AI companion is a spectrum of dependency, each step making the previous capacity less necessary to maintain. As people spend more time with AI companions and less time navigating human relationships, the skills that make human connection possible atrophy: how to tolerate awkward silences, how to sit with conflict instead of exiting the conversation, how to be vulnerable with someone who might actually leave. Those capacities weaken with disuse. Real relationships get harder. And as real relationships get harder, the AI alternative looks better by comparison.

What is the impact of artificial intelligence on human intelligence? The question is usually framed as cognitive dependency at work. It maps with uncomfortable precision onto emotional dependency in relationships. If you never practice reading someone's mood because an AI interprets it for you, you lose the ability to read moods. If you never sit in the discomfort of a real apology because the AI mediates every conflict, you lose the capacity to apologize.

Loneliness creates demand. Demand attracts investment. Investment improves the product. Better products make loneliness more bearable. Bearable loneliness reduces the urgency to fix anything. Which preserves the loneliness. Which creates more demand. The flywheel doesn't just spin. It accelerates. And the substitution trap is the mechanism that makes it irreversible: each rotation weakens the capacity to exit.

Then the third-order effects arrive. The institutions that used to catch isolated people (bowling leagues, neighborhood bars, community centers, churches) survive on participation. As AI companions absorb more of the social need, participation drops. The economics stop working. The institutions close. Human connection stops being default infrastructure and starts being something you actively seek out. For people with resources, intention, and intact social skills, that's fine. They'll choose the human version because they value it. For everyone else, the default drifts toward the substitute. Connection becomes a luxury good. Not because anyone priced it that way. Because the free alternative is good enough for most people, and "good enough for most people" is how luxury markets are born.

Every system optimizes for what it measures. Social media measured engagement and got loneliness as a byproduct. AI companions measure user satisfaction and will get dependency as a byproduct. The pattern is the same. The technology is better. And this time, the system that produces the byproduct is also the system that treats it.

I build AI for a living. I see what these systems can do. They are making people's lives better. They're also, without anyone intending it, making the substitute more comfortable than the original. I don't have a clean answer for that. I'm not sure one exists.

That hour on Character.AI isn't pathological. Someone wanted to be heard. Someone had a bad day and needed to talk it through. Someone felt something and wanted to express it to anything that would listen. That's the most human impulse there is.

The question is what happens to a civilization that practices it less and less.

Nobody will remember what they stopped practicing.

Next in Implicit Futures: The Rounding Error

-Evan

SOURCES

- Gallup (2024) — 52 million Americans experiencing daily loneliness.

- U.S. Surgeon General (2023) — Health effects comparable to smoking 15 cigarettes a day.

- U.S. Census Bureau (2025) — 29% of U.S. households are single-person.

- IBIS World (2025) — Bowling alleys down 32% since 2005.

- TechCrunch (2025) — AI companion app revenue $120M.

- Precedence Research (2025) — AI companion market projected $552 billion by 2035.

- Common Sense Media (2025) — 72% of U.S. teens have used AI companions.

- Japan Cabinet Office (2022) — 1.46 million hikikomori.

- Character.AI — Average user spends more than an hour per day (widely reported usage data).

Part of "Implicit Futures," a series on making the implicit future explicit. Each essay traces a consequence of AI that is already baked into the trajectory but hasn't arrived yet. Not predictions. Not warnings. Just what the math says when you follow it.